"Art is not what you see, but what you make others see"

— Edgar Degas

When I first learned about apps that claimed they could make finished songs from scratch, I was eager to try them. Being an artist and music producer myself, my motivation was to see where they would fall short. With all the hype around AI, a part of me needed to reaffirm that my musical abilities are unique and that "AI has nothing on me".

I believed that music created by AI meant the cliché EDM build: a kick drum that keeps speeding up, a drop with a wall of synths, chipmunk vocals tastelessly panned to each side of the stereo field, and auto-tune so aggressive that you can't even make out the lyrics. In fact, this is exactly what early Suno tracks sounded like when I first tried Suno in 2024.

But only about a year later, AI models had become far more sophisticated. By the end of 2025, the landscape had changed drastically, and I knew something was off when I started hearing AI-generated tracks that I couldn't distinguish from human-made music. My previous assumptions no longer held up.

So I gave myself a challenge: could I use AI to write a song that would genuinely move someone? Not just sound emotional, but actually make a human feel something real? I chose one of the hardest topics I could think of.

Before I say any more, I'll let you hear the song. I'll walk through exactly how I made it afterward:

Listen to the Song

Lyrics

Almost-Name

Lyrics: Claude · Music & Vocals: Suno

Verse 1

I painted the corner by the window white again

Covered up the pale yellow we'd chosen in the spring

Now the afternoon light hits the wall a different way

Like nothing ever lived here in between

Verse 2

Your father keeps his sadness in the garage these days

Organizing nails by size, rewiring broken lamps

I fold the tiny clothes back in their tissue paper graves

And wonder if he's thinking what I can't say out loud

Chorus

You were here, you were here

In the space between my heartbeat and my breath

You were real, you were real

Even if the world won't hold a place for you

I will, I will

Verse 3

September came the way it always does, indifferent

The maples on our street turned gold without asking why

I watched the neighbors bring their baby home in autumn light

And felt you like an echo in my empty hands

Bridge

They say that time will soften this

That grief becomes a gentler kind of missing

But I don't want to stop remembering

The future that we held for seven weeks

The name we never got to speak

Chorus

You were here, you were here

In the hope I carried like a secret song

You were real, you were real

And the love doesn't vanish just because you're gone

It stays, it stays, it stays

Outro

So I'll keep the corner white and simple

Leave the window open to the sound of rain

And some days I'll forget to feel the weight

And some days I will speak your almost-name

To the quiet morning, to the empty room

To the small forever I still carry inside me

Does it feel real?

For context, I have never experienced a miscarriage. I'm also a man. I can only imagine how devastating this loss is for those who go through it.

But even when I listen to it now, I have a hard time holding back tears.

The process

I want to be completely transparent about how I made this. If we're going to figure out what it means to make music with AI, we need to share what actually works.

Here's what I did:

- Picked a theme that required real emotional weight

- Got clear on the lyrical and musical style I wanted

- Used Claude for lyrics, Suno for music

- Let ChatGPT handle the prompt engineering

Choosing the theme

I wanted to test whether AI could handle something genuinely devastating. Not heartbreak. That's been done to death. But miscarriage felt different. As a parent with young kids, I can almost imagine how crushing that loss would be.

Finding the right reference points

This part isn't new to AI music. Musicians have always worked this way. Death Cab for Cutie has talked about how The Cure and Built to Spill shaped their sound. I just applied that same concept to prompting.

I wanted lyrics that felt like Ben Gibbard's writing (he's the frontman of Death Cab for Cutie). He doesn't tell you how to feel. He shows you. His lyrics work even if you're not paying attention, but if you dig in, there's genuine emotional depth.

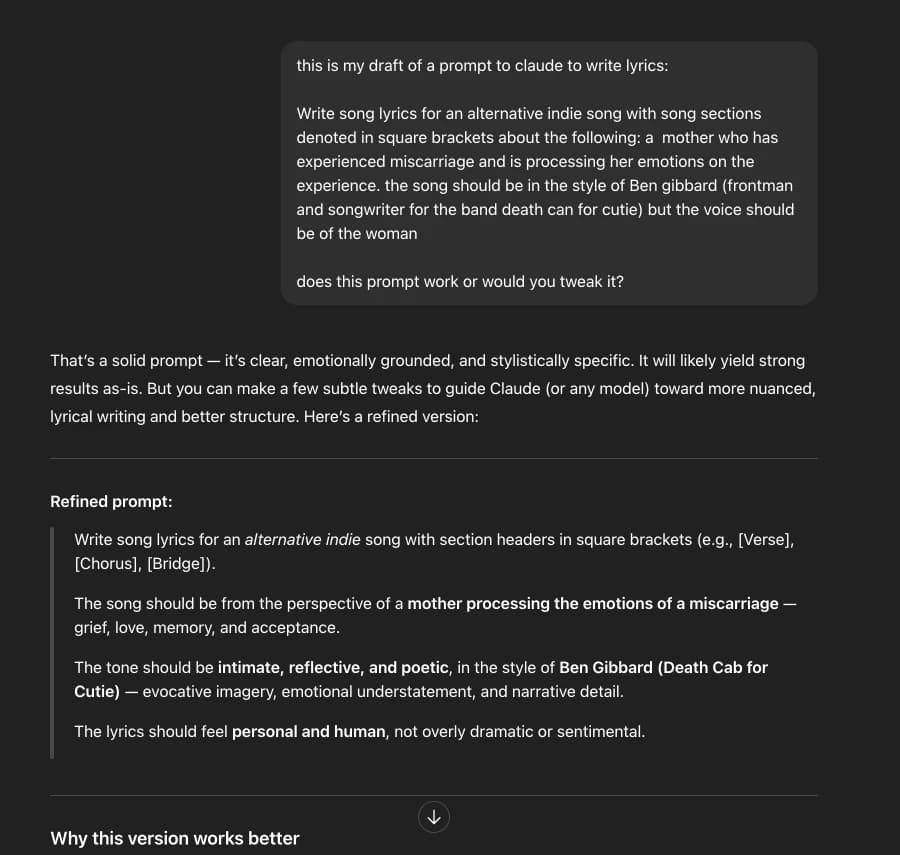

So I asked ChatGPT to help me write a prompt for Claude:

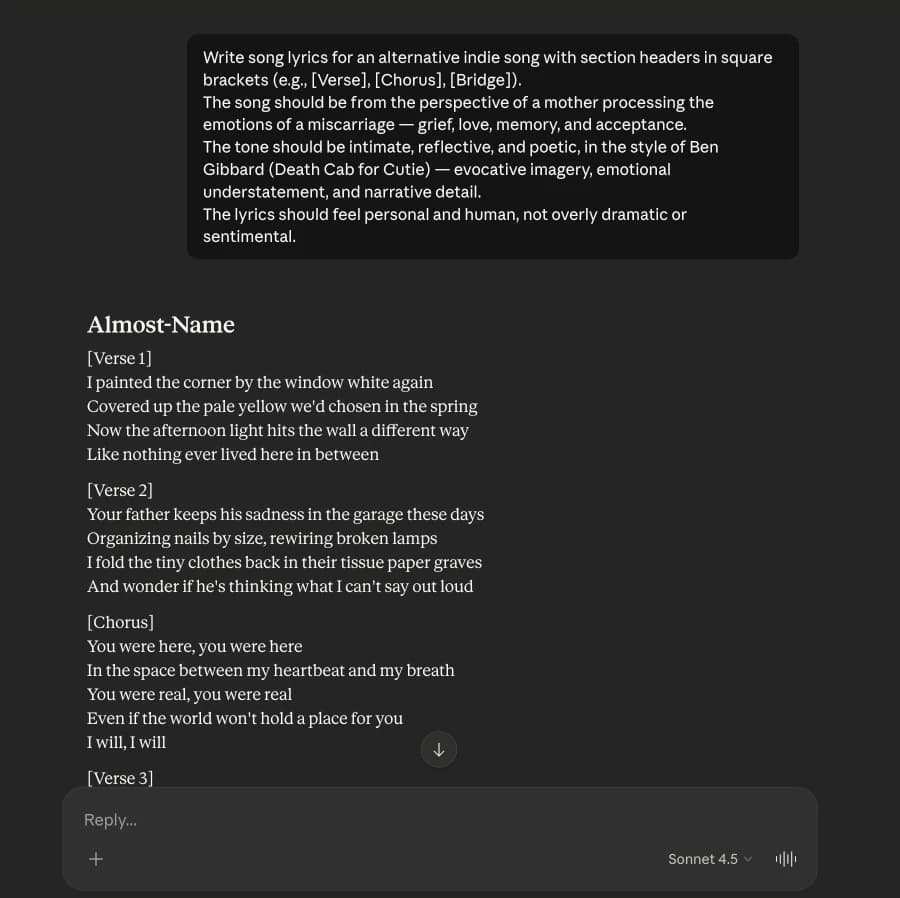

I took that prompt, pasted it into Claude, and got the lyrics:

Reading them back, I knew there was something there.

Getting the music right

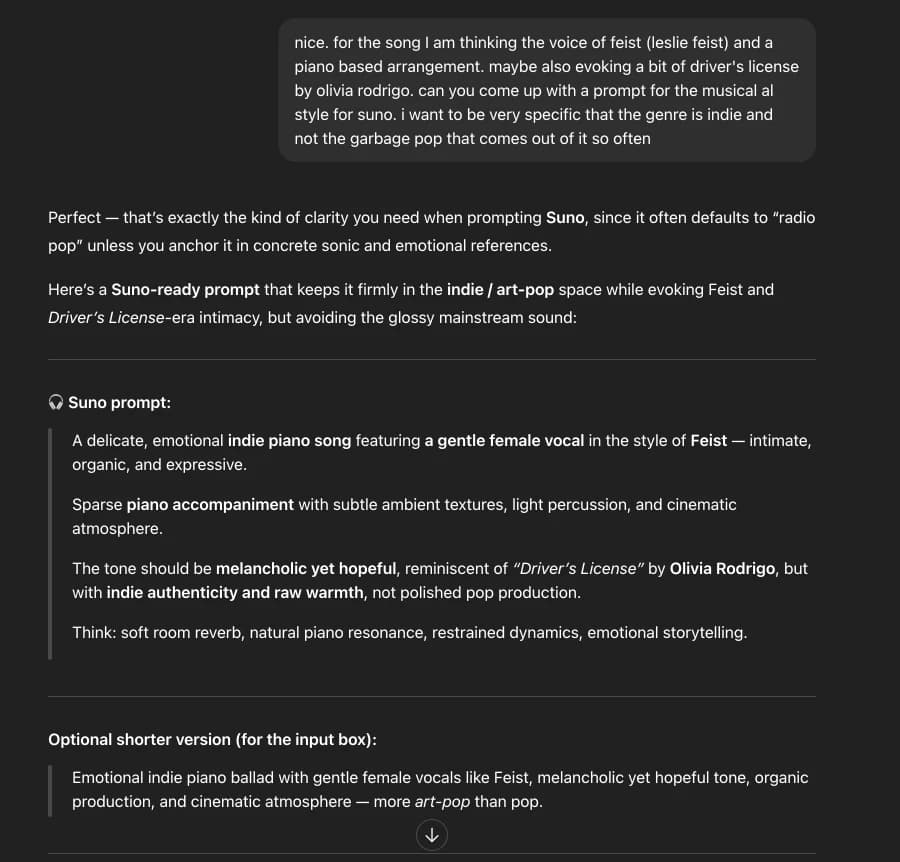

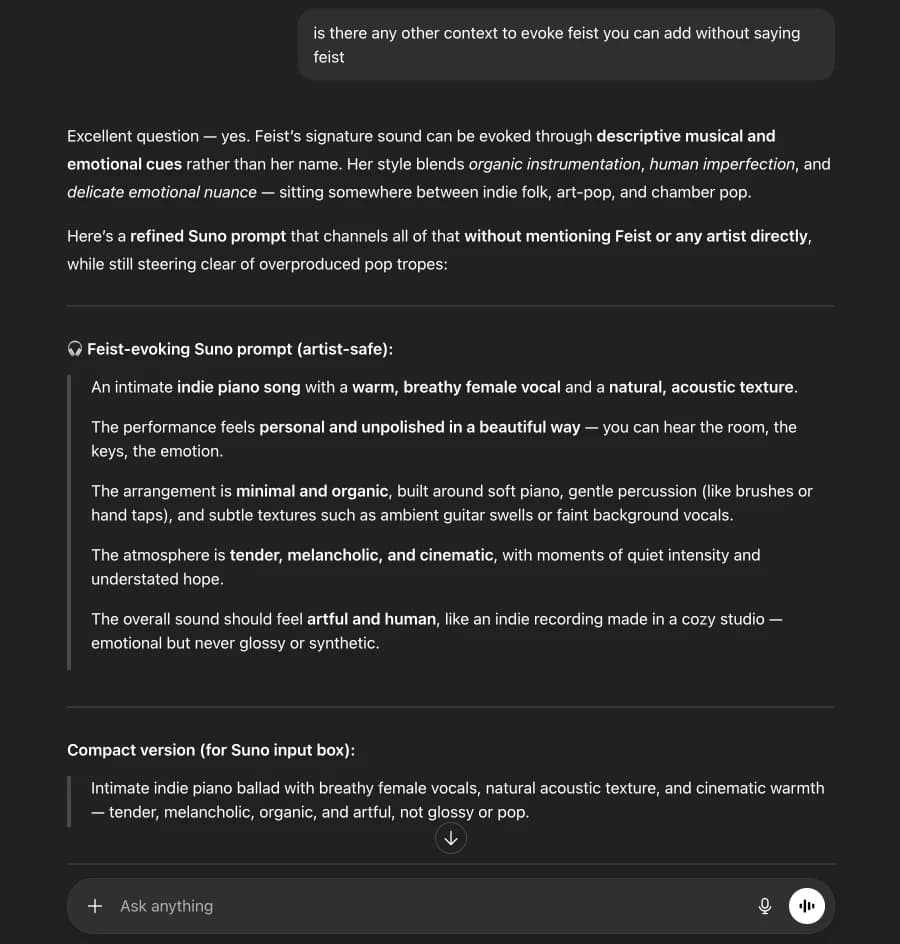

I went back to ChatGPT to create a Suno prompt. I wanted a female voice singing from the mother's perspective. Indie, not pop. I referenced Feist, whose music I love, and "Drivers License" by Olivia Rodrigo, which had really moved me. But I made it clear I wanted something raw and unpolished.

Suno rejected it. Can't mention specific artist names.

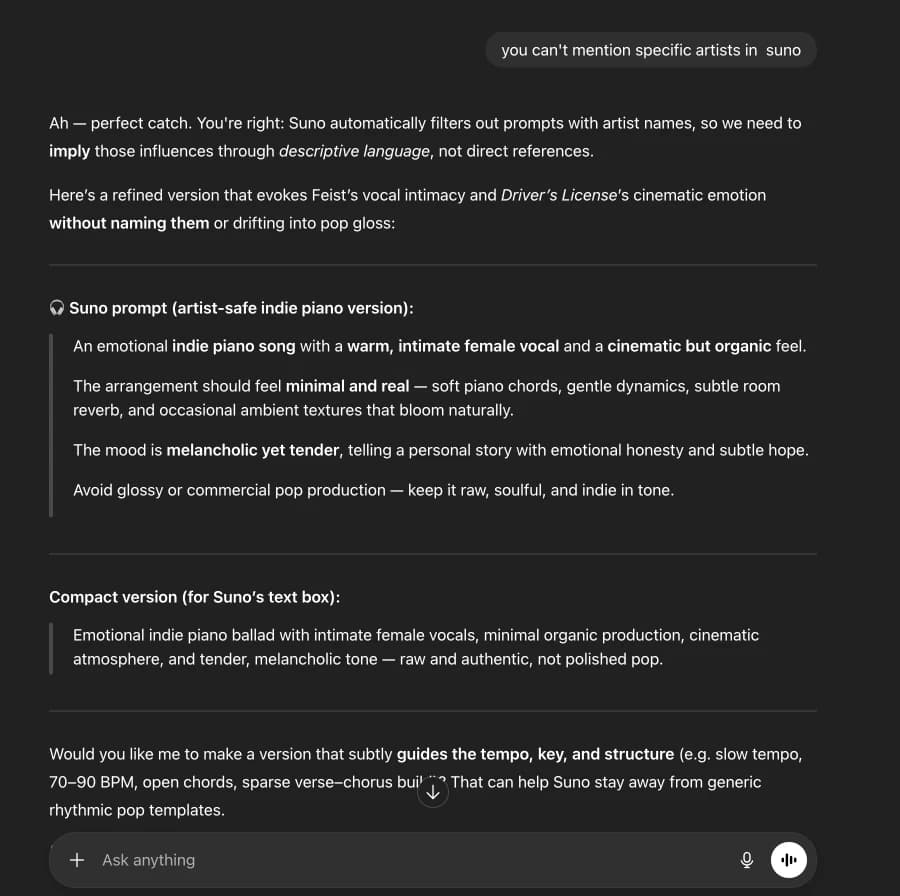

Back to ChatGPT to describe the sound without naming artists:

The prompt felt too generic when I read it back. It didn't describe that raw indie vibe I was going for. So I asked ChatGPT to dig deeper into that Feist sound without mentioning the artist name:

Then I went back to Suno. Fed it Claude's lyrics and ChatGPT's music prompt.

That was it. One prompt to Claude, a couple of iterations with ChatGPT, one generation in Suno. What came out didn't sound like Feist at all. More like indie Billie Eilish, honestly. But it worked.

But what does it mean to "create" something?

After going through this process, I'm genuinely not sure anymore.

Normally, I'm a traditionalist and don't bring Suno into the process. I'm hands-on with everything. I write my own lyrics and practice the guitar and bass parts beforehand. Then I record guitar, bass, and my own vocals. And I have a strong direction about where I am going because I am present in every step of the process.

This song was completely different. I didn't write anything. I didn't play anything. I didn't sing anything. I picked a topic, described what I wanted, and AI did the rest.

So did I really create this? Or did I just use my taste to assemble pieces that AI generated?

If I'm completely honest, I feel far removed from the song because I was not involved in the hundreds, if not thousands, of decisions that AI made to arrive at the end result. The fact that I didn't play any of the instruments or sing the lyrics only deepens this distance. But this doesn't mean that the song doesn't carry weight for me. It does. It just doesn't feel like mine.

I cried listening to it. The song was about a topic I chose, an experience I've never had. But it still took me somewhere real, and made me feel the weight of a loss that wasn't mine. The emotional truth was there.

Maybe from a listener's perspective, that is all that matters. Not the process, but the result. Not the tools, but the intention behind them.

But can someone who did what I did to create the song claim ownership of it? I'll let you decide.

The harder problem nobody talks about

Whether or not you use AI to make music, the barrier to entry is getting lower each day. But getting people to actually hear your music is the hard part.

I tried Spotify first. Here's the problem: even if you get streams, there's no way to actually connect with listeners. No comments, no reposts, no conversation. Just a silent play count. You can't build a community or turn casual listeners into fans. And you're making $3-4 per thousand streams, so you need 10,000+ monthly streams just to make $30-40.
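
To make that arithmetic concrete, here is a rough sketch. It assumes a flat payout of $3 to $4 per thousand streams, the figure quoted above. Real payouts vary by country, listener tier, and distributor, and the function names are my own, purely for illustration.

```python
import math


def monthly_earnings(streams: int, rate_per_thousand: float) -> float:
    """Estimated earnings in dollars for a number of streams.

    Assumes a flat per-thousand-streams rate, which is a simplification.
    """
    return streams / 1000 * rate_per_thousand


def streams_needed(target_dollars: float, rate_per_thousand: float) -> int:
    """Streams required to reach a dollar target at a flat rate."""
    return math.ceil(target_dollars / rate_per_thousand * 1000)


# 10,000 monthly streams at the low and high ends of the quoted range.
low = monthly_earnings(10_000, 3.0)
high = monthly_earnings(10_000, 4.0)
print(f"10,000 streams earn roughly ${low:.0f} to ${high:.0f}")

# The same numbers read in the other direction.
print(streams_needed(30, 3.0), "streams to make $30 at $3 per thousand")
```

Plug in your own rate and target to see how many streams a given income would take.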

SoundCloud is fundamentally different. People can comment on specific moments in your track, repost to their followers, and actually engage with you. That engagement is how you turn listeners into fans who come back. Plus their Fan-Powered Royalties mean your actual listeners' subscription fees go to you, not into a pool split across everyone. For a small artist, earning this way feels more achievable than depending on Spotify's algorithm-driven discovery.
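
Here is a toy comparison of the two payout ideas, to show why the difference matters for a small artist. This is a simplified sketch, not SoundCloud's or Spotify's actual formula: it ignores the platform's cut, ad revenue, and eligibility rules, and the listeners, artists, and $10 fee are all made-up numbers.

```python
from collections import defaultdict

# Hypothetical data: streams per artist for each listener in one month.
PLAYS = {
    "devoted_fan": {"indie_artist": 10},
    "heavy_listener": {"big_star": 990},
}
FEE = 10  # hypothetical monthly subscription fee per listener, in dollars


def pooled_payouts(plays, fee):
    """Pooled model: all fees go into one pot, split by share of total streams."""
    pool = fee * len(plays)
    totals = defaultdict(int)
    for per_artist in plays.values():
        for artist, count in per_artist.items():
            totals[artist] += count
    all_streams = sum(totals.values())
    return {artist: pool * count / all_streams for artist, count in totals.items()}


def fan_powered_payouts(plays, fee):
    """Fan-powered model: each listener's fee is split only among artists they played."""
    payouts = defaultdict(float)
    for per_artist in plays.values():
        listener_total = sum(per_artist.values())
        if listener_total == 0:
            continue  # a listener with no plays pays nobody in this toy model
        for artist, count in per_artist.items():
            payouts[artist] += fee * count / listener_total
    return dict(payouts)


pooled = pooled_payouts(PLAYS, FEE)
fan_powered = fan_powered_payouts(PLAYS, FEE)
print("pooled:     ", pooled)
print("fan-powered:", fan_powered)
```

In this made-up case the indie artist's one devoted fan is worth about 20 cents under the pooled split, and the full $10 when that fan's fee follows their listening.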

But even on SoundCloud, you need strategy. You can't just upload and hope for the best. You need to get your music in front of people who actually care about your genre.

I got frustrated enough with this problem that I built Artist Management to solve it. It targets SoundCloud listeners who have recently engaged with tracks similar to yours (same style, mood, energy) and surfaces your tracks to them when they're actively discovering new artists. It's worked for my own music, and now I've opened it up to others.

It's been the difference between uploading into silence and actually building an audience. Since I started using it for my own music, I've been getting real plays and engaged followers, and I've qualified for monetization.

If you're spending time making music, it's worth thinking about how you'll get it heard. The tools to create have been democratized. Discovery is the next frontier.

If you want to try Artist Management, use code GIFT50 for 50% off your first month.

But honestly, whatever you use, just make sure your music actually reaches people. That's the part that matters.